
This function is part of the SQL standard, and it can be used with most relational database management systems. In the mean time between two counts, use the cached value.Īccuracy: approximation but not too bad in normal circumstances (unless for when thousands of rows are added or deleted).Įfficiency: Very good, the value is always available.MySQL includes a COUNT() function, which allows you to find out how many rows would be returned from a query.

Example: run the exact count query every 10 minutes. It puts however additional load to the database.Į) storing ( caching) the count in the application layer - and using the 1st method (or a combination of the previous methods). This will of course put an additional load in each insert and delete but will provide an accurate count.Įfficiency: Very good, needs to read only a single row from another table. This can be improved by running ANALYZE TABLE more often.Įfficiency: Very good, it doesn't touch the table at all.ĭ) storing the count in the database (in another, "counter" table) and update that value every single time the table has an insert, delete or truncate (this can be achieved with either triggers or by modifying the insert and delete procedures). If the table is the target of frequent inserts and deletes, the result can be way off the actual count. select sql_calc_found_rows * from table_name limit 0 Ĭ) using the information_schema tables, as the linked question: select table_rowsĪccuracy: Only an approximation. I've seen it used for paging (to get some rows and at the same time know how many are int total and calculate the number of pgegs). Can be used instead of the previous way, if we also want a small number of the rows as well (changing the LIMIT).

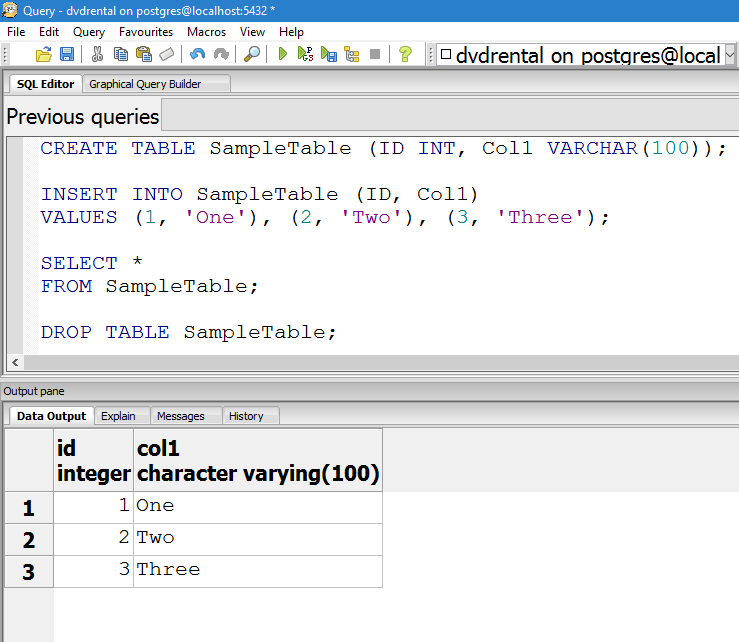

The bigger the table, the slower it gets.ī) using SQL_CALC_FOUND_ROWS and FOUND_ROWS(). The "spectacularly fast" also applies only when counting the rows of a whole MyISAM table - if the query has a WHERE condition, it still has to scan the table or an index.)įor InnoDB tables it depends on the size of the table as the engine has to do either scan the whole table or a whole index to get the accurate count. (for MyISAM tables is spectacularly fast but no one is using MyISAM these days as it has so many disadvantages over InnoDB. select count(*) as table_rows from table_name Īccuracy: 100% accurate count at the time of the query is run.Įfficiency: Not good for big tables. What is best depends on the requirements (accuracy of the count, how often is performed, whether we need count of the whole table or with variable where and group by clauses, etc.)Ī) the normal way.

There are various ways to "count" rows in a table. As it is, I will just get the highest id with SELECT id from table_name order by id DESC limit 1 and hope my tables don't get too fragmented. Nobody is going to care if it's just a case of telling someone how big a table is, but I wanted to pass the row count to a bit of code that would use that figure to create a "equally sized" asynchronous queries to query the database in parallel, similar to the method shown in Increasing slow query performance with the parallel query execution by Alexander Rubin. Does analyze table return immediately and process in the background? I feel its worth mentioning this is a test database and is not currently being written to. When I ran the query again, I now get a much closer result of 34384599 rows, but it's still not the same number as select count(*) with 34906061 rows. I ran ANALYZE TABLE data_302, which completed in 0.05 seconds. Why are the answers different by roughly 2 million rows? I am guessing the query that performs a full table scan is the more accurate number, but is there a way I can get the correct number without having to run this slow query? Unfortunately I'm getting different answers as shown below: To see how much of a performance difference there was. Which I liked because it looks like a lookup instead of a scan, so it should be fast, but I decided to test it against SELECT COUNT(*) FROM table

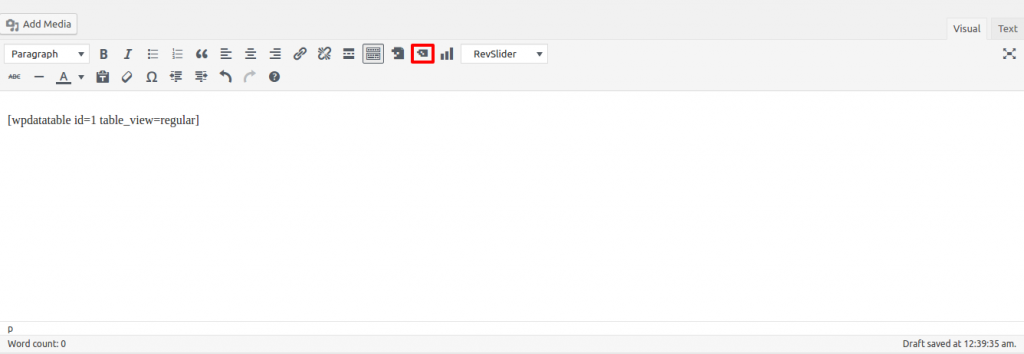

I found the post " MySQL: Fastest way to count number of rows" on Stack Overflow, which looked like it would solve my problem. I want a fast way to count the number of rows in my table that has several million rows.
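Option d) above, a counter table kept in sync by triggers, could look roughly like the sketch below. It is only an illustration: it uses Python's built-in sqlite3 module rather than MySQL so that it runs anywhere, and the table names `items` and `row_counts` and the helper functions are hypothetical, not taken from the original post.

```python
import sqlite3


def create_counted_table(conn: sqlite3.Connection) -> None:
    """Create a hypothetical `items` table and a counter row kept in sync by triggers."""
    conn.executescript(
        """
        CREATE TABLE items (id INTEGER PRIMARY KEY, payload TEXT);
        CREATE TABLE row_counts (table_name TEXT PRIMARY KEY, n INTEGER NOT NULL);
        INSERT INTO row_counts (table_name, n) VALUES ('items', 0);

        -- Every inserted row bumps the stored count by one.
        CREATE TRIGGER items_after_insert AFTER INSERT ON items
        BEGIN
            UPDATE row_counts SET n = n + 1 WHERE table_name = 'items';
        END;

        -- Every deleted row lowers the stored count by one.
        CREATE TRIGGER items_after_delete AFTER DELETE ON items
        BEGIN
            UPDATE row_counts SET n = n - 1 WHERE table_name = 'items';
        END;
        """
    )


def stored_count(conn: sqlite3.Connection) -> int:
    """Read the maintained count: a single-row lookup, no scan of `items`."""
    row = conn.execute(
        "SELECT n FROM row_counts WHERE table_name = 'items'"
    ).fetchone()
    return row[0]


def exact_count(conn: sqlite3.Connection) -> int:
    """The 'normal way', used here only to cross-check the stored count."""
    return conn.execute("SELECT COUNT(*) FROM items").fetchone()[0]


conn = sqlite3.connect(":memory:")
create_counted_table(conn)
conn.executemany("INSERT INTO items (payload) VALUES (?)", [("a",), ("b",), ("c",)])
conn.execute("DELETE FROM items WHERE payload = 'b'")
print(stored_count(conn), exact_count(conn))
```

In MySQL the same idea would use MySQL's own trigger syntax, and because TRUNCATE TABLE does not fire delete triggers there, a truncate would have to reset the counter separately.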
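Option e) above, caching the count in the application layer, might be sketched as follows. The class name `CachedRowCount`, the injectable clock, and the stand-in `fake_exact_count` function are all assumptions made for illustration; in a real application the fetch function would run the exact count query against the database.

```python
import time
from typing import Callable, Optional


class CachedRowCount:
    """Serve a cached row count and refresh it only after `ttl_seconds` have passed."""

    def __init__(
        self,
        fetch_exact: Callable[[], int],
        ttl_seconds: float = 600.0,  # 10 minutes, as in the example above
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch_exact = fetch_exact
        self._ttl = ttl_seconds
        self._clock = clock
        self._value: Optional[int] = None
        self._fetched_at = 0.0

    def get(self) -> int:
        now = self._clock()
        # Refresh on first use or once the cached value is older than the TTL.
        if self._value is None or now - self._fetched_at >= self._ttl:
            self._value = self._fetch_exact()
            self._fetched_at = now
        return self._value


# Demonstration with a fake clock and a fake "exact count" that changes on each call.
calls = []
fake_now = [0.0]


def fake_exact_count() -> int:
    calls.append(1)
    return 1000 + len(calls)


cache = CachedRowCount(fake_exact_count, ttl_seconds=600.0, clock=lambda: fake_now[0])
first = cache.get()    # runs the exact count
second = cache.get()   # served from the cache
fake_now[0] = 601.0    # pretend just over 10 minutes have passed
third = cache.get()    # runs the exact count again
print(first, second, third, len(calls))
```

The slow query runs at most once per TTL window, so the value is always available at the cost of being slightly stale between refreshes.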
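The fallback mentioned in the question, taking the highest id and carving the id space into "equally sized" pieces for parallel queries, could be sketched like this. The function `id_ranges` and the query template are hypothetical. Note that the ranges are equal in id span, not in row count, so gaps in the ids (the fragmentation the question worries about) make the chunks uneven.

```python
from typing import List, Tuple


def id_ranges(max_id: int, chunks: int, min_id: int = 1) -> List[Tuple[int, int]]:
    """Split [min_id, max_id] into at most `chunks` contiguous, inclusive id ranges."""
    if chunks < 1:
        raise ValueError("chunks must be at least 1")
    total = max_id - min_id + 1
    if total <= 0:
        return []
    chunks = min(chunks, total)          # never create empty ranges
    base, extra = divmod(total, chunks)  # the first `extra` ranges get one more id
    ranges = []
    start = min_id
    for i in range(chunks):
        size = base + (1 if i < extra else 0)
        ranges.append((start, start + size - 1))
        start += size
    return ranges


# Build one parameterised query per range; each could then be sent on its own connection.
QUERY = "SELECT * FROM table_name WHERE id BETWEEN %s AND %s"
ranges = id_ranges(max_id=10, chunks=3)
queries = [(QUERY, bounds) for bounds in ranges]
for sql, bounds in queries:
    print(sql, bounds)
```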
